Social signals are the fingerprints people leave all over the internet. The reactions. The throwaway replies. The inside jokes. The silence under a post that should have blown up.

In a feed that never stops moving, those signals are some of the clearest clues we get about what’s actually landing.

AI helps. It turns chaos into dashboards. It counts, clusters, and summarizes. It flags the spike at 2:13 a.m. that tells you something unusual is happening.

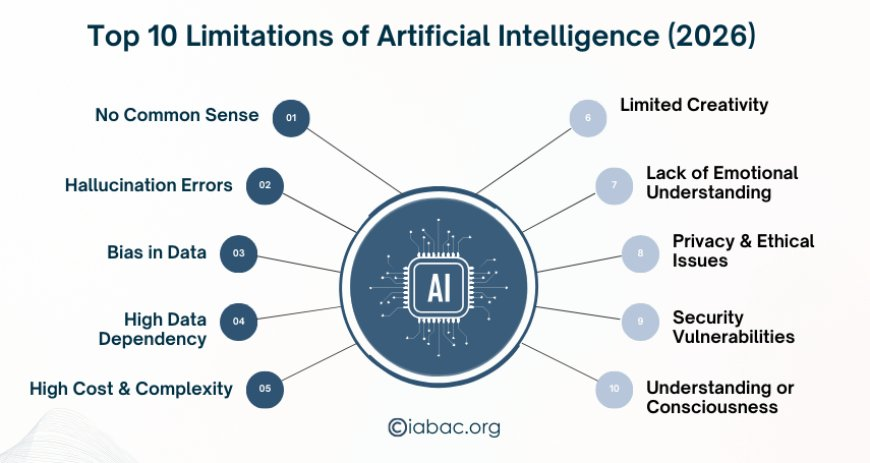

But it still misses things that matter.

We’re well past the hype in 2026. We know AI can scale better than any human team. But what it struggles with is the messy part: sarcasm, shifting norms, off-platform context, the tiny sparks inside niche communities.

That’s where human strategists earn their keep.

If you run social, insights, or brand, this isn’t theoretical. You have to know which signals you can trust to automation and which ones still need a person who understands how the internet actually behaves.

Understanding Social Signals: Beyond the Algorithms

In practice, social signals fall into two buckets.

The clean ones: likes, shares, saves, watch time. They’re structured. Easy to trend over time. AI is excellent here.

Then there’s everything else.

Humor. The energy of a comment section. The difference between curiosity and quiet frustration.

That’s where brand meaning lives.

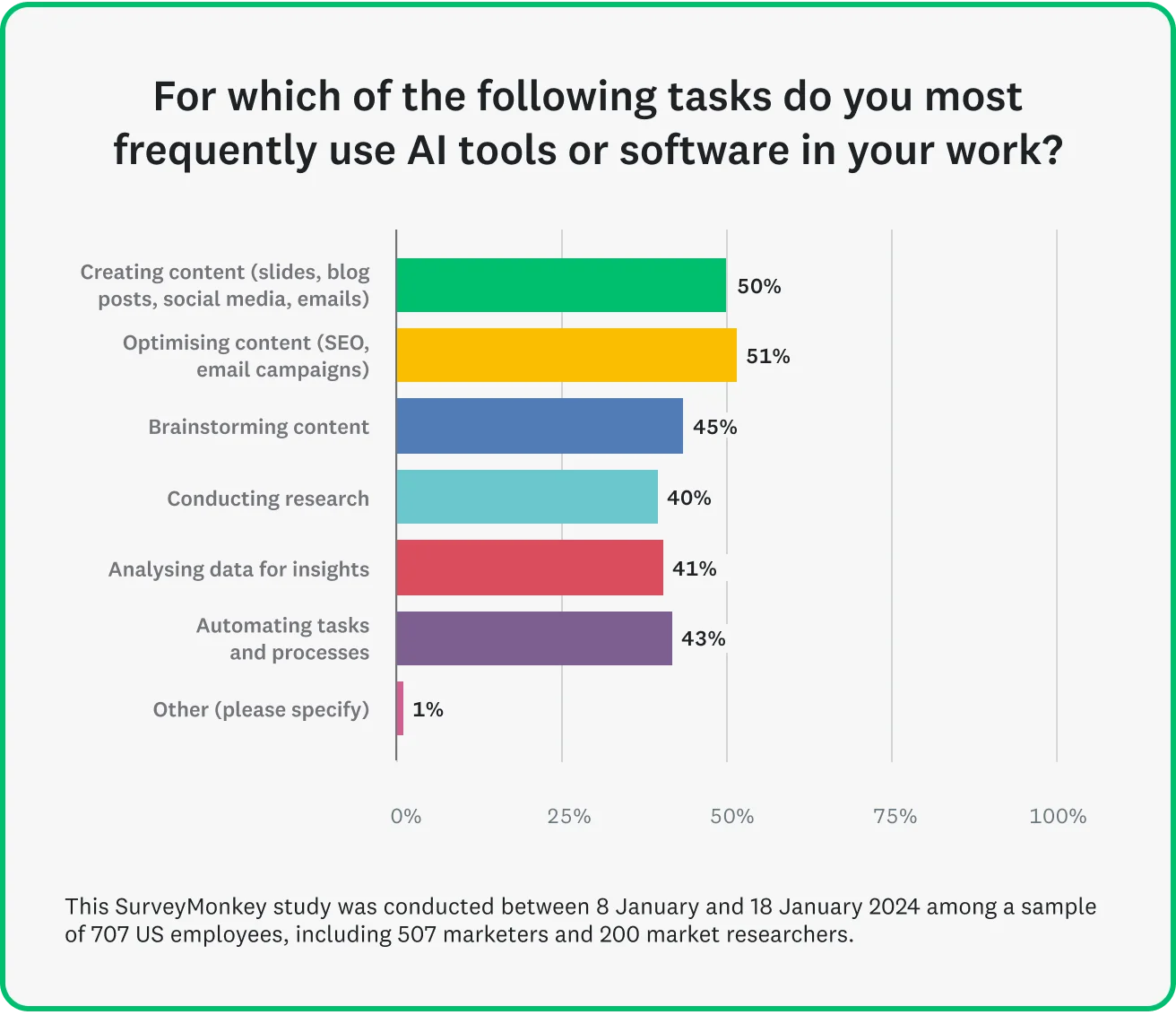

Modern AI is very good at:

- Counting engagement at scale

- Grouping similar conversations

- Spotting anomalies

- Extracting recurring keywords

What it struggles with is the connective tissue between those numbers.

A single “lol” can mean delight. It can mean tension. It can mean “I don’t agree with you, but I don’t want to escalate this.”

The system sees a positive token. But a human reads the room.

Major benchmarking reports, including Stanford's AI Index, continue to note gaps in social reasoning and contextual robustness. The issue isn't speed or compute. It's interpretation across shifting contexts.

And context is everything online.

Human Emotion and Tone Analysis

Sarcasm is the obvious example. It’s also the simplest one.

AI sees “love this update” and tags it as positive. A human hears the eye-roll, especially when the post puts “seamless” in quotation marks or sits in a reply chain full of bug reports.

Picture launch threads where automated dashboards reported “strong positive sentiment.” Meanwhile, support tickets were climbing, and power users were subtly warning each other in comments.

That gap is expensive.

Bryan Henry, President of PeterMD, sees this firsthand in health conversations online.

He says, “On testosterone and men’s health forums, you’ll see a lot of ‘I’m fine’ language. But if you read the full thread, it’s usually hesitation, confusion, or fear about side effects. A dashboard might label it neutral. A human sees uncertainty. If you respond with a hard sell, you lose trust. If you respond with education, you build it.”

It’s not just sarcasm. It’s roast culture. Playful criticism that signals loyalty, not anger.

Or the opposite. Polite phrasing that hides real churn risk.

We’re also deep into multimodal nuance. A caption that reads positive next to a stitched video that completely flips the meaning. AI vision models are improving, but they still miss tonal mismatch in edge cases.

One practical fix that works: dual-pass sentiment analysis.

Let the model do the first sweep for speed. Then review a stratified sample manually, especially:

- Posts with quotation marks

- Elongated words

- Heavy emoji usage

- Creator-led content where tone drives influence
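The dual-pass workflow above can be sketched in a few lines. This is a minimal illustration, not a production pipeline. It assumes the first-pass model labels already exist on each post. The `Post` record, the emoji code-point ranges, and the three-emoji "heavy usage" threshold are all hypothetical choices. The sketch flags posts that match the listed review triggers. It then draws a small random sample per first-pass label, so reviewers see "positive," "neutral," and "negative" calls rather than only the largest bucket.

```python
import random
import re
from collections import defaultdict
from dataclasses import dataclass


# Hypothetical post record; the first-pass model label is assumed to exist already.
@dataclass
class Post:
    text: str
    model_label: str            # e.g. "positive", "neutral", "negative"
    creator_led: bool = False   # posted by a creator whose tone drives influence


ELONGATED = re.compile(r"([A-Za-z])\1{2,}")  # a letter repeated 3+ times: "sooo", "loool"
QUOTE_CHARS = set('"“”')                     # straight and curly double quotes
HEAVY_EMOJI = 3                              # assumed threshold; tune per platform


def emoji_count(text: str) -> int:
    # Rough count over two common emoji code-point ranges; not exhaustive.
    return sum(
        1 for ch in text
        if 0x1F300 <= ord(ch) <= 0x1FAFF or 0x2600 <= ord(ch) <= 0x27BF
    )


def review_reasons(post: Post) -> list[str]:
    """Return the manual-review triggers a post matches (empty list if none)."""
    reasons = []
    if any(ch in QUOTE_CHARS for ch in post.text):
        reasons.append("quotation marks")
    if ELONGATED.search(post.text):
        reasons.append("elongated word")
    if emoji_count(post.text) >= HEAVY_EMOJI:
        reasons.append("heavy emoji")
    if post.creator_led:
        reasons.append("creator-led")
    return reasons


def stratified_review_sample(posts: list[Post], per_label: int = 2, seed: int = 0) -> list[Post]:
    """Second pass: among flagged posts, sample up to `per_label` per first-pass label."""
    strata: dict[str, list[Post]] = defaultdict(list)
    for post in posts:
        if review_reasons(post):
            strata[post.model_label].append(post)
    rng = random.Random(seed)  # seeded so the review batch is reproducible
    sample: list[Post] = []
    for label in sorted(strata):
        group = strata[label]
        sample.extend(rng.sample(group, min(per_label, len(group))))
    return sample
```

Stratifying by the model's own label keeps a large "positive" bucket from crowding out the rarer calls. Those rarer calls are often where sarcasm hides.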

An hour of human review often corrects a surprising number of misreads. This is where AI consulting services add real value.

Cultural Context and Local Nuances

Language is local.

The thumbs-up emoji is broadly positive in many markets. In parts of the Middle East, it can be read as dismissive or rude, as cross-cultural research has documented. A phrase that feels playful in the US might feel confrontational elsewhere.

Global models flatten those edges.

Automated systems may flag a “spicy” product review in Southeast Asia as negative because of its direct phrasing, while the local audience reads it as enthusiastic and honest.

That misclassification changes your response strategy.

Matthew Thompson, Founder of OwnerWebs, works with home service businesses whose reputations live or die at the local level.

“In one market, customers leave detailed five-paragraph reviews,” he notes. “In another, they’ll just post a short comment in a neighborhood Facebook group and never tag the business directly.

If you only track formal reviews, you think sentiment is flat. But when you monitor community groups, Nextdoor threads, and referral conversations, you see how tone actually shifts. That context changes how a contractor responds, whether they clarify pricing, explain timelines, or just stay quiet.”

Dialects, code-switching, borrowed slang: these aren't corner cases anymore. They're standard behavior in digital spaces.

When campaigns cross markets, regional strategists catch the tone shifts. They know when a meme is harmless and when it’s politically loaded. They hear what isn’t being said.

And they prevent avoidable mistakes.

The same pattern applies to highly localized services like licensed electricians in St. Louis. Conversations around electrical work tend to be precise and practical. People ask about permits, timelines, and code compliance.

They don’t joke. They don’t overreact. If you mistake that direct tone for low engagement, you misread the room. In categories tied to safety and trust, restraint often signals seriousness, not indifference.

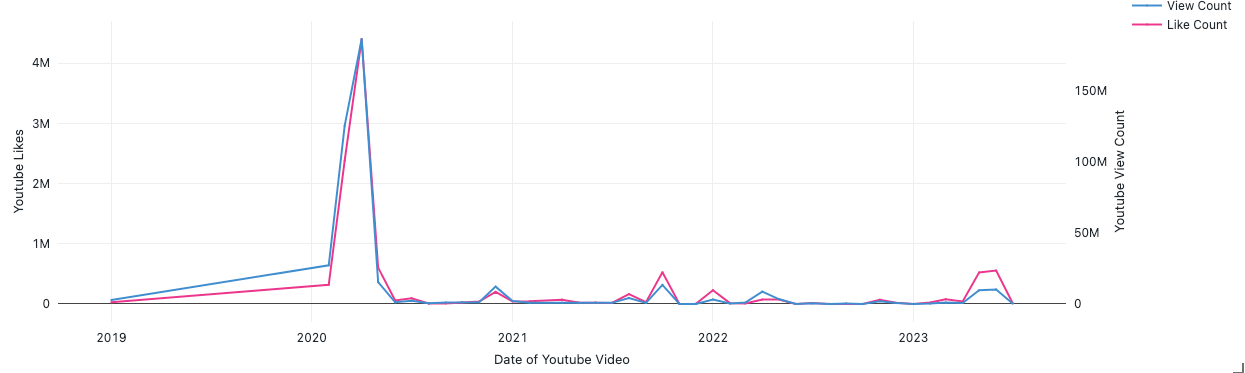

Microtrends and Community Dynamics

Microtrends start small. A phrase inside a Discord server. A recurring aesthetic in a subreddit. A creator format that hasn't hit mainstream feeds yet.

By the time there’s enough data for a model to label something a trend, you’re probably late.

TikTok aesthetics are a good example. Some cycles now rise and burn out within weeks, as coverage of short-lived fashion and content cycles has shown. If you wait for statistically significant volume, the window to be early is gone.

Strategists who are embedded in communities spot shifts before they’re measurable. They notice:

- Slight changes in comment language

- New inside jokes

- Subtle frustration building under the surface

That early detection window is where advantage lives.

Wade O’Shea, Founder at BusCharter.com.au, spends time inside creative communities where shifts are subtle before they’re visible at scale.

“In creator spaces, the change doesn’t start with volume,” he explains. “It starts with repetition. A certain caption style appears more often. People shift from polished storytelling to something more raw or more ironic. If you’re embedded in the conversation, you feel that shift before it shows up in data. By the time a tool flags it as a trend, the community has already moved to the next variation.”

Unexpected Insights from Human Interaction

Some signals never show up in dashboards.

They show up in DMs. In support calls. In live chats. In the way someone phrases a caption about your product without tagging you.

AI can summarize text. It doesn’t follow up.

Jeff Zhou, CEO of Fig Loans, says the most meaningful signals often look ordinary. “When someone replies ‘appreciate this’ to a post about credit building, it doesn't move a dashboard,” he says. “But if you look at their follow-up comments over the next few weeks, you'll often see more specific questions: about repayment timing, about what gets reported to bureaus.

That progression matters. It tells you someone is moving from curiosity to consideration. If you treat those early comments as noise, you miss the intent building underneath.”

A customer posts a photo with a vague caption. The model extracts keywords. A human asks a question and uncovers a workaround, a frustration, or a use case you didn’t design for.

This shows up clearly in seasonal industries. A company offering HVAC maintenance and repair services might notice a pattern of short, urgent comments during extreme weather: “still waiting,” “any update,” “fixed yet?” On their own, those posts don’t look strategic. But taken together, they reveal anxiety around response time and reliability.

Qualitative research has consistently shown this gap. Field studies and contextual interviews, as documented by Nielsen Norman Group over the years, uncover needs that passive analytics miss, especially around emotional drivers and real-world usage.

If you only listen at scale, you’ll miss:

- The quiet churn signals

- The private recommendations

- The micro-anxieties that never turn into public complaints

Those are often the signals that matter most.

Best Practices: Integrating AI and Human Expertise

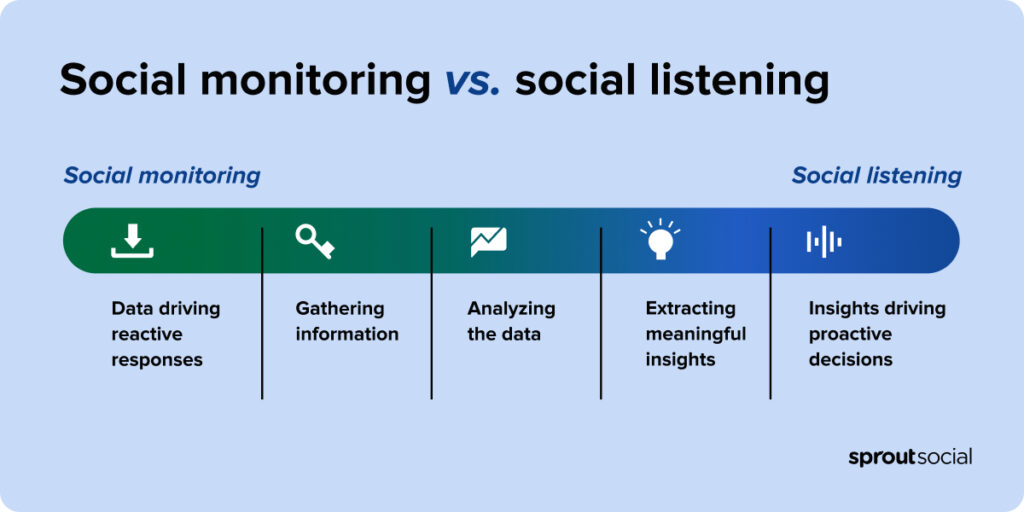

We’ll keep this very simple. Use AI for:

- Pattern detection

- Topic clustering

- Early anomaly alerts

- Large-scale summarization

Use humans for:

- Tone interpretation

- Cultural validation

- Edge-case review

- Community immersion

Define clear review thresholds. If automated sentiment confidence drops below a set level, route the post to a human. If a post comes from a high-impact influencer, review it manually regardless of score.

Keep a living glossary of market-specific slang and references. Assign strategists to edge communities where microtrends start. Pair social listening with short qualitative touchpoints: interviews, creator roundtables, community panels.
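The review thresholds and the living glossary described above can be combined into a single routing rule. Here is one way to sketch it, with loud assumptions. The 0.75 confidence floor, the 100,000-follower cut-off for "high-impact," and the glossary entries are hypothetical placeholders, not recommendations. The glossary is also assumed to hold single-word terms.

```python
import re
from dataclasses import dataclass

CONFIDENCE_FLOOR = 0.75           # hypothetical value for the "set level"
HIGH_IMPACT_FOLLOWERS = 100_000   # hypothetical cut-off for a high-impact influencer

# Hypothetical living glossary: market code -> single-word slang a global model may misread.
# Entries are illustrative only; strategists would maintain the real list per market.
GLOSSARY: dict[str, set[str]] = {
    "us": {"mid", "bussin"},
    "uk": {"peak"},
}


@dataclass
class ScoredPost:
    text: str
    market: str              # market code used to pick the glossary
    label: str               # first-pass sentiment label
    confidence: float        # model confidence in that label, 0..1
    author_followers: int


def route(post: ScoredPost) -> tuple[str, str]:
    """Return (destination, reason), where destination is 'human' or 'auto'."""
    # Checked first so influencer posts go to a person regardless of score.
    if post.author_followers >= HIGH_IMPACT_FOLLOWERS:
        return "human", "high-impact author"
    # Low-confidence labels are not trusted to automation.
    if post.confidence < CONFIDENCE_FLOOR:
        return "human", "low confidence"
    # Market-specific slang from the glossary also triggers review.
    tokens = set(re.findall(r"[a-z']+", post.text.lower()))
    if tokens & GLOSSARY.get(post.market, set()):
        return "human", "glossary term"
    return "auto", "passed all checks"
```

The order of the checks matters. Putting the influencer rule first is what makes it apply "regardless of score." Returning a reason alongside the destination lets the team audit which rule sends the most posts to people, and adjust thresholds from there.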

Then track outcomes.

Fewer reactive crises. Faster pivots. Creative that resonates on the first pass.

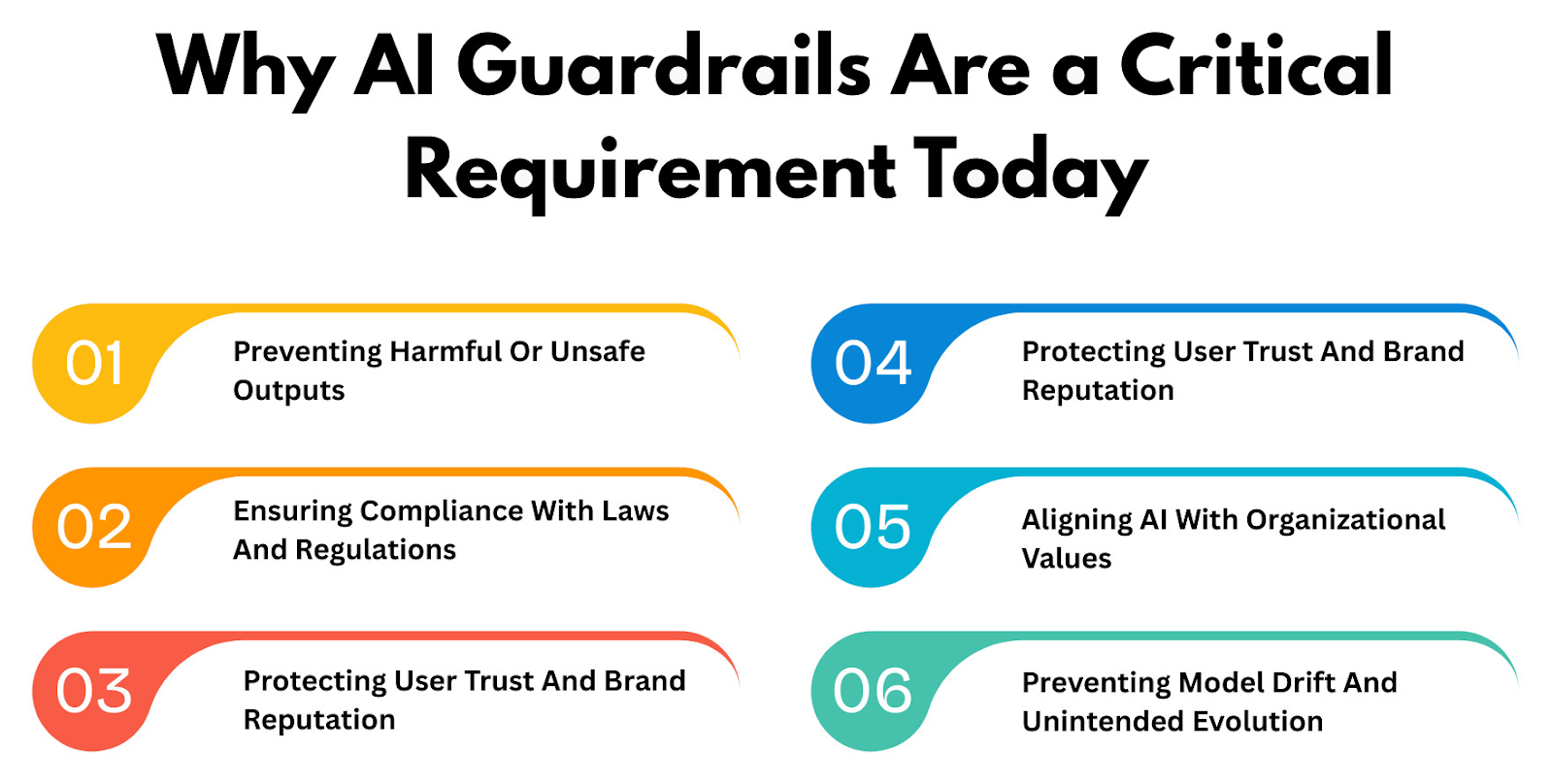

Research on AI-enabled organizations, including discussions in Harvard Business Review, points to the same principle: cross-functional teams and clear human decision rights matter. AI performs best when paired with accountable human oversight. An OpenAI software consultancy can support that approach by helping organizations integrate AI responsibly while maintaining clear governance and accountability.

Treat AI like a junior analyst with incredible stamina. Give it guardrails. Teach it where it’s wrong.

The Future of Social Signal Analysis in Marketing

AI is sharper than it was two years ago. It can scan multimodal content and summarize an entire day of conversation in minutes.

That’s useful.

But the signals that move brands still sit just beyond clean automation. The wink in a caption. The shift in a subculture. The regional joke you either get or you don't.

Let AI handle the heavy lifting. Free your team to do the human work: feeling the room, noticing tension, asking better questions.

The edge in 2026 doesn't come from more tools. It comes from better judgment layered on top of them.

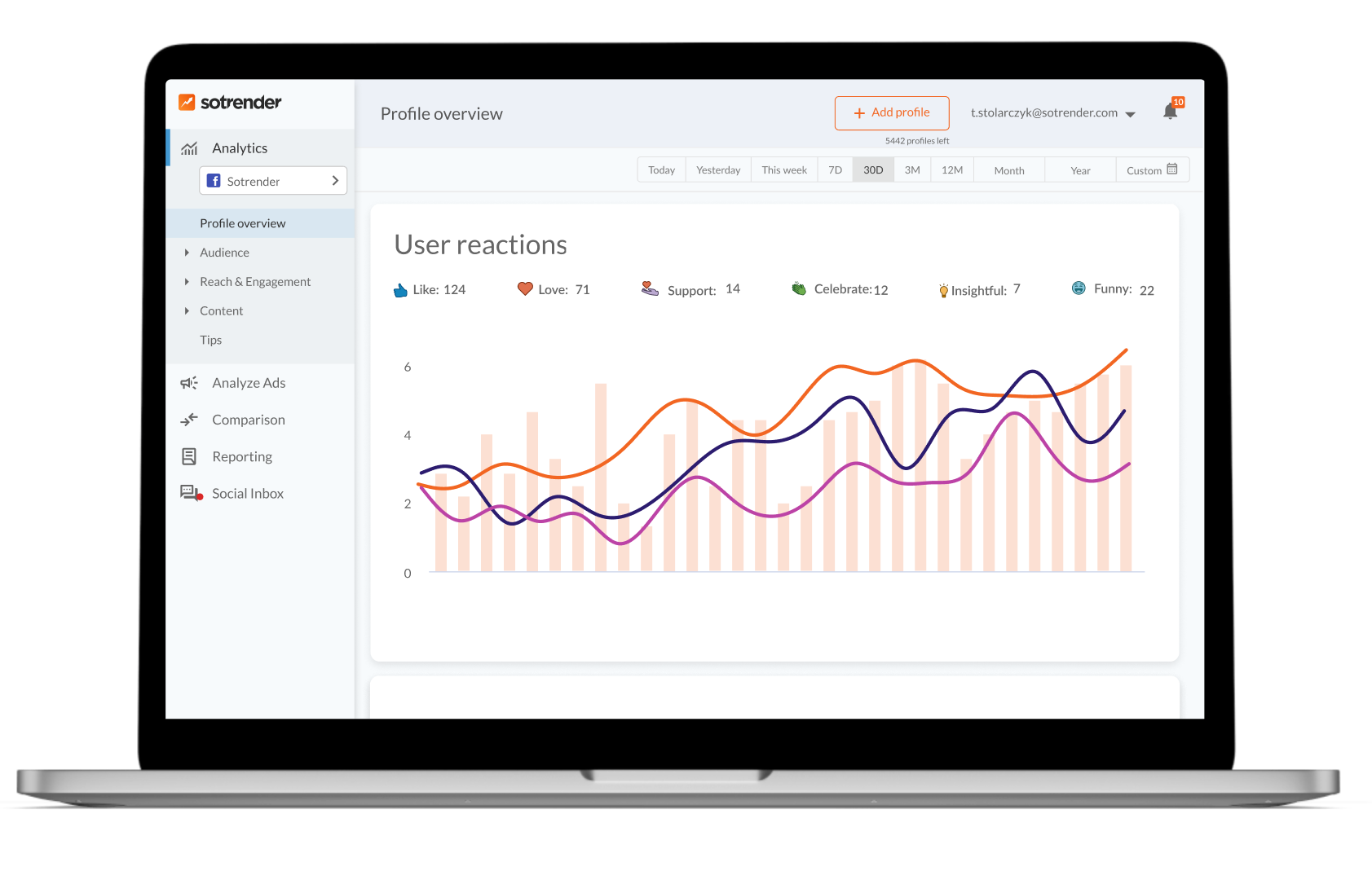

If you’re serious about improving how you interpret social signals, not just counting them, it helps to see how different tools surface the data in the first place.

To explore analytics that go beyond surface-level metrics and help you track performance across platforms, try Sotrender.